Artificial intelligence is often discussed in terms of algorithms and software.

But at scale, AI is limited not by code —

it is limited by physics.

Power density.

Thermal load.

Signal integrity.

Mechanical stability.

Electromagnetic interference.

Behind every AI accelerator, server rack, and data center module lies a material system quietly carrying the burden.

This is how we think about materials in AI hardware engineering.

1️⃣ AI Hardware Is a Thermal Problem First

Modern AI chips operate at extremely high power densities.

Training clusters push:

- High current density

- Continuous heavy workloads

- Tight packaging constraints

From a material perspective, the key challenge becomes:

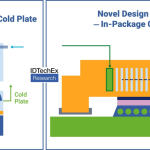

How do we move heat faster than we generate it?

This drives demand for:

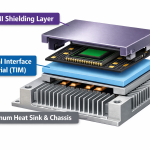

- High thermal conductivity interfaces

- Lightweight heat spreaders

- Carbon-enhanced aluminum systems

- Advanced thermal interface materials (TIMs)

- Electrically conductive yet thermally optimized layers

Material thinking here is not optional — it is structural.
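To make the heat-path argument concrete, here is a minimal sketch of a one-dimensional series thermal-resistance model, where each planar layer contributes R = t / (k · A) and the steady-state junction temperature is ambient plus power times total resistance. Every number below (die area, layer thicknesses, conductivities, sink resistance, power) is a hypothetical value picked only to show the arithmetic, and the `Layer` class and function names are illustrative, not taken from any real tool.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    """One planar layer in a 1-D conduction path (illustrative values only)."""
    name: str
    thickness_m: float        # layer thickness in metres
    conductivity_w_mk: float  # thermal conductivity in W/(m*K)
    area_m2: float            # cross-sectional area the heat flows through


def layer_resistance(layer: Layer) -> float:
    """Conduction resistance of a slab, R = t / (k * A), in K/W."""
    return layer.thickness_m / (layer.conductivity_w_mk * layer.area_m2)


def junction_temperature(power_w, ambient_c, layers, sink_resistance_k_per_w):
    """Steady-state T_j = T_ambient + P * (sum of series resistances)."""
    total = sum(layer_resistance(layer) for layer in layers)
    total += sink_resistance_k_per_w
    return ambient_c + power_w * total


# Hypothetical stack: a TIM layer plus a heat spreader over an assumed 800 mm^2 area.
AREA_M2 = 8e-4
baseline_stack = [
    Layer("TIM", 100e-6, 5.0, AREA_M2),
    Layer("heat spreader", 3e-3, 200.0, AREA_M2),
]
# Same stack, but with the TIM conductivity doubled (assumed, for comparison).
better_tim_stack = [
    Layer("TIM", 100e-6, 10.0, AREA_M2),
    Layer("heat spreader", 3e-3, 200.0, AREA_M2),
]

t_j = junction_temperature(500.0, 30.0, baseline_stack, 0.05)
t_j_better = junction_temperature(500.0, 30.0, better_tim_stack, 0.05)

print(f"Baseline junction temperature:   {t_j:.1f} C")
print(f"With higher-conductivity TIM:    {t_j_better:.1f} C")
```

Under these assumed numbers, doubling the TIM conductivity lowers the junction temperature by a few kelvin. The point is the structure of the calculation: each interface is a term in a sum, so the material with the largest resistance sets the bottleneck. Real packages also spread heat laterally, which this 1-D model ignores.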

2️⃣ Power Integrity and Signal Stability

AI hardware requires:

- High-frequency signal transmission

- Stable grounding

- Low electromagnetic interference (EMI)

At these frequencies, material choice directly affects:

- Surface conductivity

- Shielding effectiveness

- Contact resistance

- Long-term reliability

Carbon-based coatings, graphene films, conductive composites —

they are not just additives.

They become part of the signal architecture.
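One way to see why surface materials matter at high frequency is the skin effect: current crowds into a thin layer near the conductor surface, with depth δ = sqrt(ρ / (π f μ)). The sketch below computes skin depth and the resulting surface resistance R_s = ρ / δ for a thick conductor. The copper resistivity is a rounded textbook value, and the "hypothetical coating" resistivity is an assumption for illustration only, not a measured property of any graphene or carbon product.

```python
import math

MU_0 = 4 * math.pi * 1e-7  # vacuum permeability in H/m


def skin_depth(resistivity_ohm_m, frequency_hz, relative_permeability=1.0):
    """Skin depth in metres: delta = sqrt(rho / (pi * f * mu_0 * mu_r))."""
    return math.sqrt(
        resistivity_ohm_m / (math.pi * frequency_hz * MU_0 * relative_permeability)
    )


def surface_resistance(resistivity_ohm_m, frequency_hz, relative_permeability=1.0):
    """Surface resistance in ohms per square for a conductor much thicker than delta."""
    delta = skin_depth(resistivity_ohm_m, frequency_hz, relative_permeability)
    return resistivity_ohm_m / delta


COPPER_RHO = 1.68e-8   # ohm*m, rounded textbook value
COATING_RHO = 1.0e-6   # ohm*m, hypothetical conductive coating (assumption)

for freq in (1e8, 1e9, 1e10):
    d_cu = skin_depth(COPPER_RHO, freq)
    rs_cu = surface_resistance(COPPER_RHO, freq)
    rs_coat = surface_resistance(COATING_RHO, freq)
    print(
        f"{freq:.0e} Hz: copper skin depth {d_cu * 1e6:.2f} um, "
        f"R_s copper {rs_cu * 1e3:.2f} mOhm/sq, "
        f"R_s coating {rs_coat * 1e3:.2f} mOhm/sq"
    )
```

At gigahertz frequencies the skin depth in copper is on the order of micrometres, so the outermost few micrometres of a contact or shield carry most of the current. That is why a coating or surface finish can act as part of the signal path rather than as a passive add-on.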

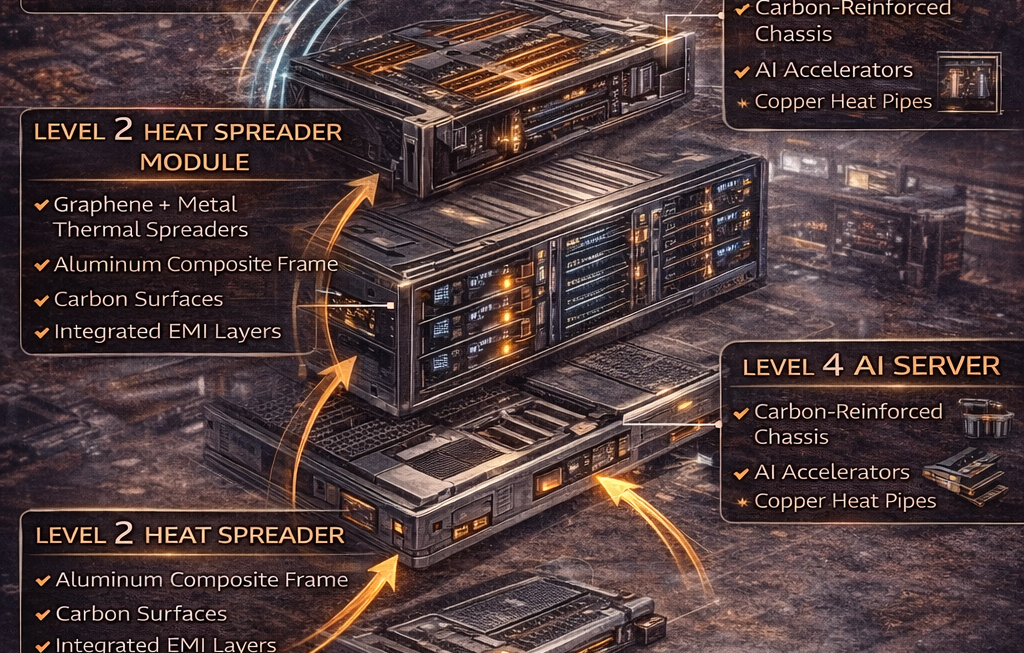

3️⃣ Weight, Structure, and Modularity

Large AI server systems must be:

- Modular

- Rack-compatible

- Mechanically stable

When systems scale, even small weight reductions matter.

Materials are evaluated not only for performance, but for:

- Stiffness-to-weight ratio

- Vibration tolerance

- Assembly compatibility

- Corrosion resistance in data center environments

A good material improves more than one variable simultaneously.
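As a rough illustration of how stiffness-to-weight can be compared, the sketch below computes two common indices: E/ρ for axially loaded members and E^(1/3)/ρ for a panel in bending (the usual minimum-mass index for a stiffness-limited plate). The modulus and density figures are rounded textbook values for generic aluminum alloy and carbon steel, used here as assumptions rather than data for any specific grade.

```python
# (Young's modulus in Pa, density in kg/m^3): rounded textbook values, assumed.
MATERIALS = {
    "aluminum alloy": (69e9, 2700.0),
    "carbon steel": (200e9, 7850.0),
}


def axial_index(modulus_pa, density_kg_m3):
    """Specific stiffness E / rho, relevant for members loaded in tension."""
    return modulus_pa / density_kg_m3


def panel_index(modulus_pa, density_kg_m3):
    """E^(1/3) / rho, relevant for a flat panel that must resist bending."""
    return modulus_pa ** (1.0 / 3.0) / density_kg_m3


for name, (modulus, density) in MATERIALS.items():
    print(
        f"{name}: E/rho = {axial_index(modulus, density) / 1e6:.1f} MJ/kg, "
        f"E^(1/3)/rho = {panel_index(modulus, density):.2f}"
    )
```

With these assumed values the two metals are nearly tied on E/ρ, yet aluminum scores roughly twice as high on the panel index. Which material is "lighter for the same stiffness" therefore depends on how the part is loaded, which is one reason chassis panels and structural rails can end up in different materials.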

4️⃣ Manufacturing Compatibility Over Peak Performance

In AI hardware engineering, the best-performing material rarely wins.

The material that wins is:

- Process-compatible

- Scalable

- Supply-stable

- Consistent across batches

A 20% theoretical improvement means little if it disrupts production yield.

Engineering teams prioritize predictable integration over experimental superiority.
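The yield argument can be checked with simple arithmetic. The sketch below uses a deliberately crude metric, relative performance multiplied by production yield, as "delivered performance per unit started". The 20% gain comes from the text above; the yield figures (95% baseline, 75% with the new material) are hypothetical, and the function names are illustrative.

```python
def delivered_performance(relative_performance, yield_fraction):
    """Performance delivered per unit started: good units times their performance."""
    return relative_performance * yield_fraction


def break_even_yield(baseline_perf, baseline_yield, candidate_perf):
    """Yield the candidate needs in order to match the baseline's delivered performance."""
    return baseline_perf * baseline_yield / candidate_perf


# Hypothetical yields; only the 20% performance gain is taken from the text.
baseline = delivered_performance(1.00, 0.95)
candidate = delivered_performance(1.20, 0.75)
needed = break_even_yield(1.00, 0.95, 1.20)

print(f"Baseline delivered performance:  {baseline:.3f}")
print(f"Candidate delivered performance: {candidate:.3f}")
print(f"Candidate break-even yield:      {needed:.1%}")
```

Under these assumptions the "better" material delivers less per unit started, and it would need a yield of about 79% just to break even. A real comparison would also fold in scrap cost, rework, and qualification time, which usually push the break-even point higher.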

5️⃣ System-Level Thinking: Materials as Enablers

We do not ask:

“Is this graphene conductive enough?”

We ask:

- Does it reduce overall cooling cost?

- Does it improve rack density?

- Does it simplify EMI design?

- Does it lower total system energy consumption?

AI infrastructure is a system of systems.

Materials that enable architectural simplification create real value.

The Shift in Mindset

Traditional material development focuses on:

- Highest conductivity

- Lowest resistivity

- Strongest mechanical properties

AI hardware engineering focuses on:

- Integration friction

- Thermal bottleneck relief

- Multi-functionality

- System-level ROI

This is a different language.

The Real Question

In AI hardware, materials are not chosen because they are advanced.

They are chosen because they remove constraints.

When a material:

- Improves heat dissipation

- Maintains signal integrity

- Reduces weight

- Remains scalable

it becomes an infrastructure enabler.

And in AI, infrastructure wins.